Previous post on Getting Smart

Not long ago, artificial intelligence in education felt novel. It was shiny, experimental, and, for many educators, at times unsettling. When ChatGPT arrived in November 2022, the initial conversations centered on fear. I recall receiving emails, text messages, phone calls, and visits from educators who were worried about cheating, plagiarism, lost skills, and what instantly felt like an overwhelming pace of change. It was one more thing to adjust to, not long after the upheaval so many of us felt in March 2020.

Since that initial adjustment to the growing use of AI at the end of 2022 and through 2023, I’ve seen a shift. At first, there was skepticism, uncertainty, and hesitation, and not just in education. However, as we’ve continued to adjust to new tools and new ways of working, I’ve noticed a move from treating AI as a “what if” to accepting that AI is here and its use is increasing. It’s embedded in tools educators already use, and if it isn’t already part of the daily routine and workflow of teaching and learning, it likely will be, slowly but surely.

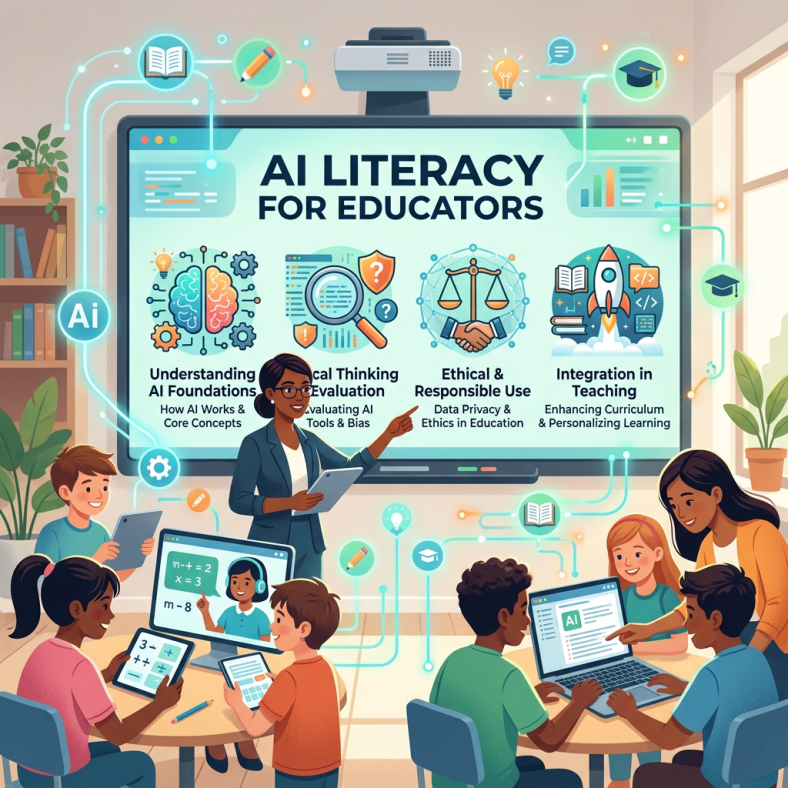

I’ve spoken about this shift from novelty to normalcy and how it brings a new challenge: educator upskilling.

A few years ago, I started researching the AI training available to educators and other professionals. At the end of 2023, 87% of educators in the United States had not received any AI training. In my workshops, some attendees are having their first training experience more than three years after ChatGPT made its debut. So I think we need to focus on an important question, in education and beyond. The question is no longer whether educators need professional learning around AI; most people agree that they do. The bigger issue is whether we are approaching AI professional development in ways that are deep, sustained, and human-centered, or whether we are still relying on one-and-done sessions that barely scratch the surface. Given AI and the pace of change in education and the world, we need to do better and be prepared.

Shifting to Ongoing Capacity Building

When I completed my doctorate nearly two years ago, my research focused heavily on professional learning in emerging technologies, with a strong emphasis on AI. Even then, the message was clear: a single PD session, or even a series of short, tool-based trainings, was not enough, especially if those trainings took place early in the year or within a limited time span.

Yet that is still how much of AI PD is structured today. In surveys during my sessions and in conversations with other educators, a common experience emerges:

- A 30-minute overview.

- A 15-minute “certified educator” badge.

- A walkthrough of one tool done well.

While these experiences can be helpful, especially for getting started and when time is limited, they don’t build AI literacy in the long term. They build familiarity, whether with AI concepts or with an AI tool. But familiarity is not AI literacy, not for us as educators and not for the students we are preparing for a future surrounded by AI and a world of work that seeks employees skilled in AI.

Continue reading the original post on Getting Smart.

About Rachelle

Dr. Rachelle Dené Poth is a Spanish and STEAM: What’s Next in Emerging Technology Teacher. Rachelle is also an attorney with a Juris Doctor degree from Duquesne University School of Law and a Master’s in Instructional Technology. Rachelle received her Doctorate in Instructional Technology, with a research focus on AI and Professional Development. In addition to teaching, she is a full-time consultant and works with companies and organizations to provide PD, speaking, and consulting services. Contact Rachelle for your event!

Rachelle is an ISTE-certified educator and community leader who served as president of the ISTE Teacher Education Network. She was named the EdTech Trendsetter of 2024 by EdTech Digest, one of 30 K-12 IT Influencers to follow in 2021, and one of 150 Women Global EdTech Thought Leaders in 2022.

She is the author of ten books, including “What The Tech? An Educator’s Guide to AI, AR/VR, the Metaverse and More” and “How To Teach AI.” Other books include “In Other Words: Quotes That Push Our Thinking,” “Unconventional Ways to Thrive in EDU,” “The Future is Now: Looking Back to Move Ahead,” “Chart A New Course: A Guide to Teaching Essential Skills for Tomorrow’s World,” “True Story: Lessons That One Kid Taught Us,” and “Things I Wish […] Knew.” Her newest, “How To Teach AI,” is available from ISTE or on Amazon.

Contact Rachelle to schedule sessions about Artificial Intelligence, AI and the Law, Coding, AR/VR, and more for your school or event! Submit the Contact Form.

Follow Rachelle on Bluesky, Instagram, and X at @Rdene915
